Record Consistency Analysis Batch – Puritqnas, Rasnkada, reginab1101, Site #Theamericansecrets

The record consistency analysis batch examines data from Puritqnas, Rasnkada, reginab1101, and Site #Theamericansecrets. It identifies cross-source discrepancies, aligns timestamps and schemas, and audits individual records so that each one can be traced back to its origin. Root causes such as uneven field mappings and incomplete provenance are documented along with their downstream implications. Practical reconciliation steps (governance, profiling, cleansing, validation, and documentation) are outlined, though some questions about next actions remain open.

What Is Record Consistency Analysis for Puritqnas and Friends

Record consistency analysis for Puritqnas and the related sources evaluates how uniformly their data and records are produced, stored, and maintained across sources.

The methodical assessment identifies data reconciliation needs, aligns source definitions, and documents provenance.

Findings emphasize reproducibility and traceability, enabling reliable cross-source comparisons.

This framework favors transparency and rigorous, evidence-based record consistency practices.

How We Detect Inconsistencies Across Puritqnas, Rasnkada, reginab1101, Site Theamericansecrets

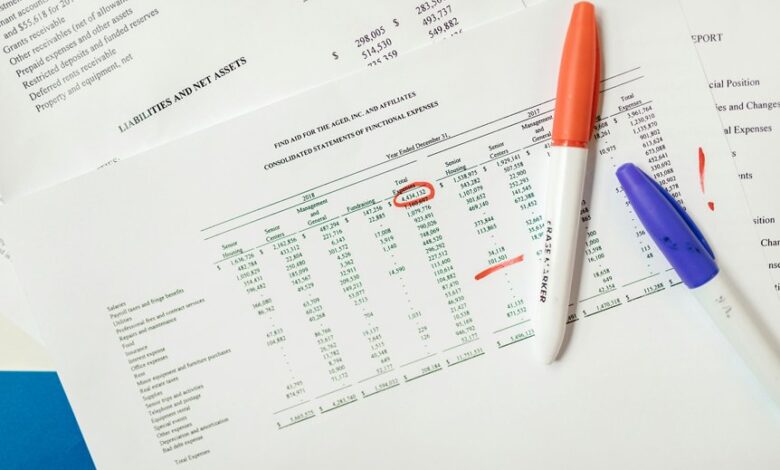

To establish comparable data across Puritqnas, Rasnkada, reginab1101, and Site Theamericansecrets, the methodology builds on prior work defining data provenance and alignment.

The approach identifies inconsistency patterns through cross-source comparisons, timestamp and schema harmonization, and record-level audits.
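A cross-source comparison of this kind can be sketched in a few lines. The example below is a minimal illustration, not the batch's actual tooling: it assumes each source has already been loaded into a dictionary keyed by a shared record ID, and the function name `find_discrepancies` and the sample fields are hypothetical.

```python
from typing import Any


def find_discrepancies(
    source_a: dict[str, dict[str, Any]],
    source_b: dict[str, dict[str, Any]],
) -> dict[str, list[str]]:
    """Return, per record ID, the issues found when comparing two sources."""
    report: dict[str, list[str]] = {}
    # Walk the union of IDs so records missing from either side are reported.
    for record_id in sorted(source_a.keys() | source_b.keys()):
        if record_id not in source_a:
            report[record_id] = ["missing in source A"]
            continue
        if record_id not in source_b:
            report[record_id] = ["missing in source B"]
            continue
        rec_a, rec_b = source_a[record_id], source_b[record_id]
        # Compare every field seen on either side; an absent field reads as None.
        issues = [
            f"field '{field}' differs: {rec_a.get(field)!r} vs {rec_b.get(field)!r}"
            for field in sorted(rec_a.keys() | rec_b.keys())
            if rec_a.get(field) != rec_b.get(field)
        ]
        if issues:
            report[record_id] = issues
    return report


# Illustrative data only; these records are made up for the example.
sample_a = {"r1": {"name": "x", "count": 1}, "r2": {"name": "y"}}
sample_b = {"r1": {"name": "x", "count": 2}, "r3": {"name": "z"}}
sample_report = find_discrepancies(sample_a, sample_b)
```

Records that agree on every field are left out of the report, so an empty result means the two sources are consistent for the fields they carry.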

Data reconciliation then resolves discrepancies through rule-based prioritization, metadata traces, and flagging for manual review, so that each resolution is transparent and reproducible.
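One way to picture rule-based prioritization with a metadata trace is the sketch below. It is an assumption-laden illustration: the trust order in `SOURCE_PRIORITY` is invented for the example (the analysis does not state which source should win), and `reconcile_field` is a hypothetical helper, not part of any named tool.

```python
from typing import Any

# Hypothetical trust order, purely illustrative: earlier names win on conflict.
SOURCE_PRIORITY = ["Puritqnas", "Rasnkada", "reginab1101"]


def reconcile_field(values_by_source: dict[str, Any]) -> dict[str, Any]:
    """Pick one value for a field and record how the choice was made."""
    # Ignore sources that supplied no value for this field.
    present = {src: val for src, val in values_by_source.items() if val is not None}
    ranked = [src for src in SOURCE_PRIORITY if src in present]
    if not ranked:
        # No prioritized source has a value: leave the decision to a person.
        return {"value": None, "chosen_from": None, "conflict": False, "needs_review": True}
    winner = ranked[0]
    # A conflict exists when the supplied values are not all identical.
    conflict = len({repr(val) for val in present.values()}) > 1
    return {
        "value": present[winner],
        "chosen_from": winner,   # metadata trace: which source supplied the value
        "conflict": conflict,    # metadata trace: whether sources disagreed
        "needs_review": False,
    }
```

Keeping `chosen_from` and `conflict` alongside the value means a later audit can see why a value was selected without rerunning the reconciliation.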

Root Causes and Impact on Downstream Analytics

Given the cross-source provenance framework and harmonization procedures, the root causes of data misalignment are traced to heterogeneous source schemas, incomplete field mappings, and divergent timestamp formats, which collectively undermine data integrity.
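Divergent timestamp formats are the easiest of these causes to show concretely. The sketch below normalizes a few formats to UTC ISO 8601. The list in `KNOWN_FORMATS` is an assumption about what the sources might emit, as are the day-first reading of slash dates and the choice to treat timestamps without an offset as UTC; each would need to be confirmed against the real data.

```python
from datetime import datetime, timezone

# Assumed input formats; replace with what each source really emits.
KNOWN_FORMATS = (
    "%Y-%m-%dT%H:%M:%S%z",  # ISO-like with a UTC offset
    "%Y-%m-%d %H:%M:%S",    # no offset
    "%d/%m/%Y %H:%M",       # day-first slash date, no offset
)


def to_utc_iso(raw: str) -> str:
    """Parse a timestamp in any known format and return it as UTC ISO 8601."""
    for fmt in KNOWN_FORMATS:
        try:
            parsed = datetime.strptime(raw.strip(), fmt)
        except ValueError:
            continue  # try the next candidate format
        if parsed.tzinfo is None:
            # Assumption: timestamps without an offset are already in UTC.
            parsed = parsed.replace(tzinfo=timezone.utc)
        return parsed.astimezone(timezone.utc).isoformat()
    raise ValueError(f"unrecognized timestamp format: {raw!r}")
```

Raising on an unrecognized format, rather than guessing, keeps unparseable values visible so they can be routed to manual review.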

These deficiencies disrupt data governance practices, obstruct accurate data lineage tracing, and propagate errors downstream.

Consequently, analytics outcomes suffer from biased aggregations, delayed decisions, and diminished stakeholder confidence across interconnected systems.

Practical Steps to Reconcile Data and Improve Quality

Efforts to reconcile data and elevate quality should follow a structured, evidence-based workflow that targets root causes identified in prior analyses.

The practical steps start with data governance, which establishes ownership, accountability, and standards, and with data profiling, which quantifies quality gaps.
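As a rough illustration of what quantifying a quality gap can mean, the sketch below computes per-field completeness, one common profiling metric. The function name `completeness_by_field` and the rule that `None` and the empty string count as missing are assumptions made for the example.

```python
from typing import Any


def completeness_by_field(records: list[dict[str, Any]]) -> dict[str, float]:
    """Return the share of records with a non-empty value for each field."""
    if not records:
        return {}
    # Profile every field that appears in at least one record.
    fields = sorted({name for record in records for name in record})
    return {
        name: sum(1 for r in records if r.get(name) not in (None, "")) / len(records)
        for name in fields
    }
```

Running the same measurement before and after cleansing gives a simple, repeatable way to check whether a change actually improved the data.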

From there, cleanse, standardize, and validate the data iteratively; measure the improvements; document decisions; and sustain governance so that data quality stays durable, transparent, and scalable across domains.

Conclusion

In this audit, the data looks uniform on the surface while discrepancies sit beneath it. Records that are dutifully tagged and timestamped appear flawless until cross-source provenance checks expose the gaps. The root causes are ambiguous schemas and patchy field mappings. The recommended steps (governance, profiling, cleansing, validation, and documentation) offer a path to durable order. The more the data is standardized, the clearer the remaining gaps become, which shows that consistency is less a state than a disciplined, ongoing effort.